Pixar has long been a pioneer in the animation field. When it comes to reinventing modern techniques, Pixar somehow always manages to come up with something futuristic – and their newest approach to rigging is no exception.

Good animation costs a lot

The animation pipeline has hardly changed since its inception in 1991. This is a problem, as media relies heavily on realistic, emotive characters to immerse its audience.

Games in particular have long struggled with facial animation – think Mass Effect Andromeda, or Fallout 4. This is a direct reflection of a high skill barrier and a highly competitive industry. Meanwhile, the rest of the art pipeline has improved massively over the years: software such as Blender and Unreal Engine constantly updates its resources and free plugins, which makes creating 3D assets far more intuitive and creative. Traditional animation, on the other hand, still requires hundreds of hours of manually weighting vertices, adding keyframes and adjusting deformations, not to mention the rigging process.

In addition, games development and 3D modelling in general are steeped in free tutorials, whereas 3D and 2D animation content is often locked behind paywalls or subscription services, with many animation roles in studios requiring a closely related degree as a minimum.

The final barrier to great animation in a game is rendering and processing cost. Allowing light to reflect dynamically on a character as it reacts and moves is typically expensive, especially once clothes, emotion and body language are added. It’s for this reason that games typically reserve complex facial animation for cutscenes, with a far simpler facial rig and set of animations used in real time.

So, what’s the solution?

Pixar’s latest innovation, curvenets, is described in a recent technical document. This new solution completely shakes up the current process, replacing it with something more intuitive and art-based. Pixar has a long-held tradition of pioneering in the digital media industry – they even released their in-house renderer, RenderMan, for public use.

It’s likely that Pixar will be encouraging universities and studios to begin learning the curvenet workflow as time goes on.

Wait – what is a Curvenet?

This new method uses constructed lines that contour a character’s mesh, creating a network of splines across it, called a “curvenet”. Curvenets can be used for face, body and clothes rigging, and Pixar intends to use them to replace traditional skinning and bone-based methods. Previous new approaches to rigging and animation have been notoriously fickle, offered limited control over a character, and often proved time-consuming in cleanup and post-production.
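To make the idea of a curve driving a mesh more concrete, here is a minimal Python sketch. It is not Pixar’s algorithm: it assumes a single 2D cubic Bézier curve instead of a full network of splines, and the names `bezier`, `bind` and `deform` are hypothetical helpers invented for this illustration. Each vertex is attached to the nearest point on the curve in its rest pose, then follows that point when the curve is reshaped.

```python
from dataclasses import dataclass

Point = tuple[float, float]


def bezier(ctrl: list[Point], t: float) -> Point:
    """Evaluate a cubic Bezier curve (4 control points) at t in [0, 1]."""
    u = 1.0 - t
    weights = (u ** 3, 3 * u * u * t, 3 * u * t * t, t ** 3)
    x = sum(w * p[0] for w, p in zip(weights, ctrl))
    y = sum(w * p[1] for w, p in zip(weights, ctrl))
    return (x, y)


@dataclass
class Binding:
    t: float       # curve parameter of the point this vertex follows
    offset: Point  # vertex position relative to that point at bind time


def bind(vertices: list[Point], ctrl: list[Point], samples: int = 100) -> list[Binding]:
    """Attach each vertex to the nearest sampled point on the rest-pose curve."""
    bindings = []
    for vx, vy in vertices:
        def dist2(t: float) -> float:
            cx, cy = bezier(ctrl, t)
            return (cx - vx) ** 2 + (cy - vy) ** 2

        best_t = min((i / samples for i in range(samples + 1)), key=dist2)
        cx, cy = bezier(ctrl, best_t)
        bindings.append(Binding(best_t, (vx - cx, vy - cy)))
    return bindings


def deform(bindings: list[Binding], ctrl: list[Point]) -> list[Point]:
    """Move every bound vertex along with the (possibly reshaped) curve."""
    out = []
    for b in bindings:
        cx, cy = bezier(ctrl, b.t)
        out.append((cx + b.offset[0], cy + b.offset[1]))
    return out


# Rest pose: a straight curve with three "mesh" vertices sitting just above it.
rest_curve = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
vertices = [(0.0, 0.2), (1.5, 0.2), (3.0, 0.2)]
bindings = bind(vertices, rest_curve)

# The "animator" lifts the two middle control points; the vertices follow.
posed_curve = [(0.0, 0.0), (1.0, 1.0), (2.0, 1.0), (3.0, 0.0)]
moved = deform(bindings, posed_curve)
print(moved)
```

In this toy version the animator only edits four control points, and the middle vertex rises with the arch of the curve while the two end vertices stay put. A production system would also have to handle many interconnected curves, smooth falloff between them, and rotation of the offsets, none of which is attempted here.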

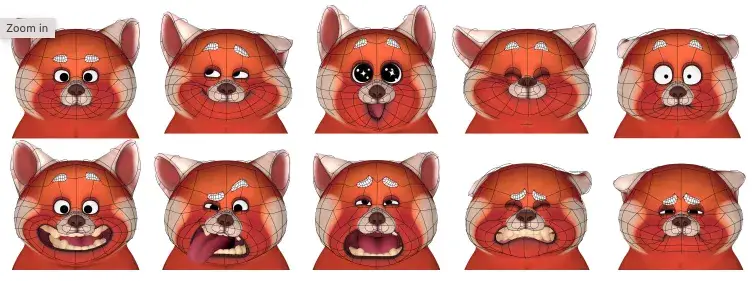

This is the first mainstream alternative to the ubiquitous spatial techniques that doesn’t just match skeleton-based rigging, but improves on it. Curve-based editing integrates blendshape technology into the workflow so that animations can be characterful, lively and fun, essentially taking advantage of those inevitable little deformations to produce this amazing outcome.

Pixar animators typically film their own reference footage for animating their characters, which has long contributed to the virtually perfect animation seen in their films. Even in 1995, with limited technology and software that was still in development, Pixar released the first feature-length computer-animated film, Toy Story. Although it may seem a bit outdated next to today’s technology, the movie is still a stunning feat. The animation in particular is a real standout; it was created by “tracing” real-life footage with 3D models, much as 2D animation had done in the preceding decades.

Curvenets have been built around this legacy of rotoscoping to help drive home the characteristic movement and near-perfectionism Pixar is known for. This spline-based methodology was developed specifically for the film Coco, and was initially used only on characters’ fingers in the close-up shots of guitar playing. The soft, bouncy deformations created by these curvenets are beautifully suited to this – the slightly weighted, fluid movements play into the core themes of the movie, especially the importance and beauty of making and playing music.

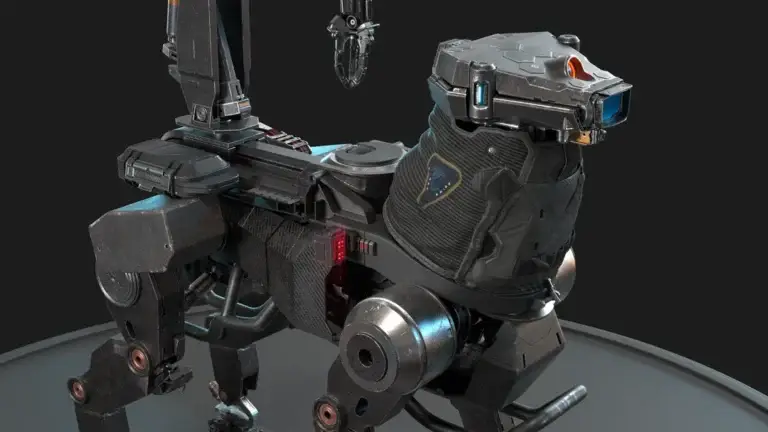

The curve-based system lends itself well to non-human characters, too. The lack of bones prevents rigid results, allowing things like octopuses and ghosts to move realistically yet distinctively, with a strong sense of personality in each animated character. Check out the difference between the octopus Stretch in Toy Story 3 (2010) and the character Hank in Finding Dory (2016). Hank was animated with a prototype version of the curvenet technology, and captures that slick, invertebrate feeling that previous animation sequences didn’t quite reproduce.

As such, when development began on Soul, another movie featuring musical instruments and non-human characters, the curvenet technology served as the primary means of rigging and animation creation – and it worked perfectly.

What this means for animators today

Expect traditional methods for facial animation, clothes simulation, and invertebrate, biped and quadruped animation to be completely overhauled in the coming years. This new approach is sleek, intuitive and fast and, most of all, easier. Whilst some animation purists may disagree, it’s hard to argue with a more art-based approach to the medium that allows more creators to access and learn animation.

As shown below, Mei from the acclaimed Turning Red was animated with curvenets, including her facial animations. The rigging process still allows for dynamic, reactive expressions that an animator can easily manipulate. The expressions are defined by certain deformations that, when triggered, make for some cool results – notice how the pupils can be scaled for different emotions?

How do you feel about curvenets? Will you be integrating them into your workflow? Let us know.